Use these Inter 2nd Year Maths 2A Formulas PDF Chapter 9 Probability to solve questions creatively.

Intermediate 2nd Year Maths 2A Probability Formulas

→ An experiment which can be repeated any number of times under essentially identical conditions and which is associated with a set of known results is called a random experiment or trial if the result of any single repetition of the experiment is not certain and is any one of the associated set.

→ A combination of elementary events in a trial is called an event.

→ The list of all elementary events in a trial is called the list of exhaustive events.

→ Elementary events are said to be equally likely if they have the same chance of happening.


→ If there are n exhaustive equally likely elementary events in a trial and m of them are favourable to an event A, then \(\frac{m}{n}\) is called the probability of A. It is denoted by P(A).

→ P(A) = \(\frac{\text { Number of favourable cases (outcomes) with respect to } A}{\text { Number of all cases (outcomes) of the experiment }}\)
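As a quick illustration of the classical definition, the sketch below counts favourable and total outcomes for one throw of a fair die. The die example and the helper name `classical_probability` are assumptions made for this illustration and are not part of the notes.

```python
from fractions import Fraction

def classical_probability(event, sample_space):
    """P(A) = favourable outcomes / all outcomes, assuming equally likely outcomes."""
    favourable = [x for x in sample_space if x in event]
    return Fraction(len(favourable), len(sample_space))

# Hypothetical example: one throw of a fair six-sided die.
S = {1, 2, 3, 4, 5, 6}
A = {2, 4, 6}  # event "an even number turns up"
p_even = classical_probability(A, S)
print(p_even)  # 1/2
```

Exact fractions are used instead of floats so that the result matches the m/n form of the definition.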

→ The set of all possible outcomes (results) in a trial is called the sample space for the trial. It is denoted by ‘S’. The elements of S are called sample points.

→ Let S be a sample space of a random experiment. Every subset of S is called an event.

→ Let S be a sample space. The event Φ is called the impossible event and the event S is called the certain event in S.

→ Two events A, B in a sample space S are said to be disjoint or mutually exclusive if A ∩ B = Φ

→ Two events A, B in a sample space S are said to be exhaustive if A ∪ B = S.

→ Two events A, B in a sample space S are said to be complementary if A ∪ B = S, A ∩ B = Φ. The complement B of A is denoted by Ā (or) \(A^{c}\).

→ Let S be a finite sample space and let \(\mathcal{P}(S)\) denote the power set of S. A real valued function P: \(\mathcal{P}(S)\) → R is said to be a probability function on S if (i) P(A) ≥ 0 ∀ A ∈ \(\mathcal{P}(S)\), (ii) P(S) = 1, (iii) A, B ∈ \(\mathcal{P}(S)\), A ∩ B = Φ ⇒ P(A ∪ B) = P(A) + P(B). For each A ∈ \(\mathcal{P}(S)\), P(A) is called the probability of A.


→ If A is an event in a sample space S, then 0 ≤ P(A) ≤ 1.

→ If A is an event in a sample space S, then the ratio P(A) : P(Ā) is called the odds in favour of A and P(Ā) : P(A) is called the odds against A.
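The two ratios can be computed directly from P(A). The following is a minimal sketch; the function names `odds_in_favour` and `odds_against` and the value P(A) = 1/3 are assumptions chosen for illustration.

```python
from fractions import Fraction

def odds_in_favour(p):
    """Return (a, b) with a : b = P(A) : P(not A), reduced to lowest terms."""
    ratio = Fraction(p) / (1 - Fraction(p))  # requires P(A) != 1
    return ratio.numerator, ratio.denominator

def odds_against(p):
    """Return (a, b) with a : b = P(not A) : P(A)."""
    a, b = odds_in_favour(p)
    return b, a

p = Fraction(1, 3)         # hypothetical value of P(A)
print(odds_in_favour(p))   # (1, 2)
print(odds_against(p))     # (2, 1)
```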

→ If A, B are two events in a sample space S, then P(A ∪ B) = P(A) + P(B) – P(A ∩ B)

→ If A, B are two events in a sample space, then P(B – A) = P(B) – P(A ∩ B) and P(A – B) = P(A) – P(A ∩ B) .

→ If A, B, C are three events in a sample space S, then

P(A ∪ B ∪ C) = P(A) + P(B) + P(C) – P(A ∩ B) – P(B ∩ C) – P(C ∩ A) + P(A ∩ B ∩ C).
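The addition formulas for two and three events, and the formula for P(B – A), can be checked by direct counting. The sketch below assumes a sample space of the integers 1 to 30 with equally likely outcomes; the events chosen are illustrative only.

```python
from fractions import Fraction

S = set(range(1, 31))                  # assumed sample space: integers 1..30, equally likely
A = {x for x in S if x % 2 == 0}       # multiples of 2
B = {x for x in S if x % 3 == 0}       # multiples of 3
C = {x for x in S if x % 5 == 0}       # multiples of 5

def P(E):
    return Fraction(len(E), len(S))

two_events = P(A) + P(B) - P(A & B)
three_events = (P(A) + P(B) + P(C)
                - P(A & B) - P(B & C) - P(C & A)
                + P(A & B & C))

print(P(A | B) == two_events)          # True
print(P(B - A) == P(B) - P(A & B))     # True
print(P(A | B | C) == three_events)    # True
```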

→ If A, B are two events in a sample space, then the event of B happening after the event A has happened is called a conditional event. It is denoted by B/A.

→ If A, B are two events in a sample space S and P(A) ≠ 0, then the probability of B after the event A has occurred is called the conditional probability of B given A. It is denoted by P\(\left(\frac{B}{A}\right)\)

→ If A, B are two events in a sample space S such that P(A) ≠ 0 then \(P\left(\frac{B}{A}\right)=\frac{n(A \cap B)}{n(A)}\)

→ Let A, B be two events in a sample space S such that P(A) ≠ 0, P(B) ≠ 0, then

- \(P\left(\frac{A}{B}\right)=\frac{P(A \cap B)}{P(B)}\)

- \(P\left(\frac{B}{A}\right)=\frac{P(A \cap B)}{P(A)}\)
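For equally likely outcomes, the counting form n(A ∩ B)/n(A) and the probability form P(A ∩ B)/P(A) give the same value. The sketch below checks this on an assumed experiment of throwing two fair dice; the events are chosen only for illustration.

```python
from fractions import Fraction
from itertools import product

# Assumed experiment: two fair dice, 36 equally likely outcomes.
S = set(product(range(1, 7), repeat=2))
A = {(i, j) for (i, j) in S if i + j == 8}   # sum is 8
B = {(i, j) for (i, j) in S if i == j}       # doublet

def P(E):
    return Fraction(len(E), len(S))

by_counts = Fraction(len(A & B), len(A))     # n(A ∩ B) / n(A)
by_probabilities = P(A & B) / P(A)           # P(A ∩ B) / P(A)
print(by_counts, by_probabilities)           # 1/5 1/5
```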

→ Multiplication theorem on Probability: If A and B are two events of a sample space S and P(A) > 0, P(B) > 0 then P(A ∩ B) = P(A) . P(B/A) = P(B) . P(A/B).

→ Two events A and B are said to be independent if P(A ∩ B) = P(A). P(B). Otherwise A, B are said to be dependent.
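The product test for independence can be applied mechanically once the events are listed. This is a small sketch on an assumed two-dice experiment; the helper `independent` and the three events are hypothetical names introduced here.

```python
from fractions import Fraction
from itertools import product

S = set(product(range(1, 7), repeat=2))      # two fair dice (assumed)

def P(E):
    return Fraction(len(E), len(S))

def independent(A, B):
    """True when P(A ∩ B) = P(A) . P(B)."""
    return P(A & B) == P(A) * P(B)

first_even = {(i, j) for (i, j) in S if i % 2 == 0}
second_six = {(i, j) for (i, j) in S if j == 6}
sum_is_12 = {(i, j) for (i, j) in S if i + j == 12}

print(independent(first_even, second_six))   # True
print(independent(first_even, sum_is_12))    # False
```

The second pair is dependent because a sum of 12 forces the first die to show 6, which is even.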


→ Bayes’ theorem: Suppose E1, E2, ……, En are mutually exclusive and exhaustive events of a random experiment with P(Ei) > 0 for i = 1, 2, ……, n. Then for any event A of the experiment with P(A) > 0 we have

\(P\left(\frac{E_{k}}{A}\right)\) = \(\frac{P\left(E_{k}\right) P\left(\frac{A}{E_{k}}\right)}{\sum_{i=1}^{n} P\left(E_{i}\right) P\left(\frac{A}{E_{i}}\right)}\) for k = 1, 2, ……, n.
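Bayes' formula translates into a short function: multiply each prior by its likelihood, sum the products for the denominator, and divide. The sketch below is one possible way to write it; the function name `bayes` and the three-machine data are hypothetical and serve only as an example.

```python
from fractions import Fraction

def bayes(priors, likelihoods, k):
    """P(E_k / A) from priors P(E_i) and likelihoods P(A / E_i); k is a 0-based index."""
    total = sum(p * l for p, l in zip(priors, likelihoods))  # P(A), the denominator
    return priors[k] * likelihoods[k] / total

# Hypothetical data: three machines make 50%, 30%, 20% of the items,
# with defect rates 2%, 3%, 5% respectively. A = "the item is defective".
priors = [Fraction(1, 2), Fraction(3, 10), Fraction(1, 5)]
likelihoods = [Fraction(2, 100), Fraction(3, 100), Fraction(5, 100)]

posteriors = [bayes(priors, likelihoods, k) for k in range(3)]
print(posteriors)
```

With these assumed figures the three posterior probabilities are 10/29, 9/29 and 10/29, and they add up to 1 as expected for mutually exclusive and exhaustive events.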

Random Experiment:

If the result of an experiment is not certain and is any one of the several possible outcomes, then the experiment is called Random experiment.

Sample space:

The set of all possible outcomes of an experiment is called the sample space of the experiment and is denoted by S.

Event:

Any subset of the sample space ‘S’ is called an Event.

Equally likely Events:

A set of events is said to be equally likely if there is no reason to expect one of them in preference to the others.

Exhaustive Events:

A set of events is said to be exhaustive if the performance of the experiment always results in the occurrence of at least one of them.

Mutually Exclusive Events:

A set of events is said to be mutually exclusive if the happening of one of them prevents the happening of any of the remaining events.

Classical Definition of Probability:

If there are n exhaustive, mutually exclusive and equally likely elementary events of an experiment and m of them are favourable to an event A, then the probability of A, denoted by P(A), is defined as \(\frac{m}{n}\).


Axiomatic Approach to Probability:

Let S be a finite sample space. A real valued function P from the power set of S into R is called a probability function if

(1) P(A) ≥ 0 ∀ A ⊆ S

(2) P(S) = 1, P(Φ) = 0;

(3) P(A ∪ B) = P(A) + P(B) if A ∩ B = Φ.

Here the image of A w.r.t. P, denoted by P(A), is called the probability of A.

Note:

- P(A) + P(A̅) = 1

- If A1 ⊆ A2, then P(A1) ≤ P(A2) where A1, A2 are any two events.
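The axioms and the two notes can be verified exhaustively on a small sample space. The sketch below assumes four equally likely sample points and enumerates every event in the power set; the helper `power_set` is a name introduced for this illustration.

```python
from fractions import Fraction
from itertools import chain, combinations

S = frozenset({1, 2, 3, 4})                  # small sample space chosen for illustration

def power_set(s):
    """All subsets of s, as frozensets."""
    items = list(s)
    subsets = chain.from_iterable(combinations(items, r) for r in range(len(items) + 1))
    return [frozenset(c) for c in subsets]

def P(A):
    return Fraction(len(A), len(S))          # equally likely sample points (assumed)

events = power_set(S)
non_negative = all(P(A) >= 0 for A in events)
additive = all(P(A | B) == P(A) + P(B) for A in events for B in events if not (A & B))
complement = all(P(A) + P(S - A) == 1 for A in events)
monotone = all(P(A) <= P(B) for A in events for B in events if A <= B)
print(non_negative, P(S) == 1, additive, complement, monotone)  # True True True True True
```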

Odds in favour and odds against an Event:

Suppose A is any event of an experiment. The odds in favour of the event A are P(A) : P(A̅). The odds against A are P(A̅) : P(A).

Addition theorem on Probability:

If A, B are any two events in a sample space S, then P(A ∪ B) = P(A) + P(B) – P(A ∩ B).

If A and B are mutually exclusive events, then

- P(A ∪ B) = P(A) + P(B)

If A, B, C are any three events in S, then

- P(A ∪ B ∪ C) = P(A) + P(B) + P(C) – P(A ∩ B) – P(A ∩ C) – P(B ∩ C) + P(A ∩ B ∩ C).

Conditional Probability:

If A and B are two events in a sample space and P(A) ≠ 0, then the probability of B after the event A has occurred is called the conditional probability of B given A and is denoted by P(B/A).

P(B/A) = \(\frac{\mathrm{n}(\mathrm{A} \cap \mathrm{B})}{\mathrm{n}(\mathrm{A})}=\frac{\mathrm{P}(\mathrm{A} \cap \mathrm{B})}{\mathrm{P}(\mathrm{A})}\)

Similarly

P(A/B) = \(\frac{\mathrm{n}(\mathrm{A} \cap \mathrm{B})}{\mathrm{n}(\mathrm{B})}=\frac{\mathrm{P}(\mathrm{A} \cap \mathrm{B})}{\mathrm{P}(\mathrm{B})}\)

Independent Events:

The events A and B of an experiment are said to be independent if the occurrence of A does not influence the happening of the event B.

i.e. A, B are independent if P(A/B) = P(A) or P(B/A) = P(B).

i.e. P(A ∩ B) = P(A) . P(B).

Multiplication Theorem:

If A and B are any two events in S then

P(A ∩ B) = P(A) P(B/A) if P(A) ≠ 0.

P(A ∩ B) = P(B) P(A/B) if P(B) ≠ 0.

The events A and B are independent if

P(A ∩ B) = P(A) P(B).

A set of events A1, A2, A3, …, An is said to be pairwise independent if

P(Ai ∩ Aj) = P(Ai) P(Aj) for all i ≠ j.
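Pairwise independence only asks the product rule of every pair of events. The sketch below checks it for three events on an assumed experiment of two fair coin tosses; the events are a standard illustration and are not taken from the notes.

```python
from fractions import Fraction
from itertools import combinations, product

S = set(product("HT", repeat=2))             # two fair coin tosses (assumed)

def P(E):
    return Fraction(len(E), len(S))

A1 = {s for s in S if s[0] == "H"}           # first toss is a head
A2 = {s for s in S if s[1] == "H"}           # second toss is a head
A3 = {s for s in S if s[0] == s[1]}          # both tosses agree

events = [A1, A2, A3]
pairwise = all(P(X & Y) == P(X) * P(Y) for X, Y in combinations(events, 2))
triple_product_holds = P(A1 & A2 & A3) == P(A1) * P(A2) * P(A3)
print(pairwise)               # True
print(triple_product_holds)   # False
```

As an aside, the second result shows that the product rule can hold for every pair and still fail for all three events together.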


Theorem:

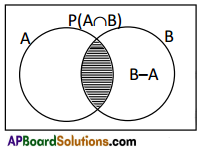

Addition Theorem on Probability: If A, B are two events in a sample space S, then

P(A ∪ B) = P(A) + P(B) – P(A ∩ B)

Proof:

From the figure (Venn diagram) it can be observed that

(B – A) ∪ (A ∩ B) = B, (B – A) ∩ (A ∩ B) = Φ

∴ P(B) = P[(B – A) ∪ (A ∩ B)]

= P(B – A) + P(A ∩ B)

⇒ P(B – A) = P(B) – P(A ∩ B) ………(1)

Again from the figure, it can be observed that

A ∪ (B – A) = A ∪ B, A ∩ (B – A) = Φ

∴ P(A ∪ B) = P[A ∪ (B – A)]

= P(A) + P(B – A)

= P(A) + P(B) – P(A ∩ B), from (1)

∴ P(A ∪ B) = P(A) + P(B) – P(A ∩ B)

Theorem:

Multiplication Theorem on Probability.

Let A, B be two events in a sample space S such that P(A) ≠ 0, P(B) ≠ 0, then

i) P(A ∩ B) = P(A)P\(\left(\frac{B}{A}\right)\)

ii) P(A ∩ B) = P(B)P\(\left(\frac{A}{B}\right)\)

Proof:

Let S be the sample space associated with the random experiment. Let A, B be two events of S such that P(A) ≠ 0 and P(B) ≠ 0. Then by the definition of conditional probability,

P\(\left(\frac{B}{A}\right)=\frac{P(B \cap A)}{P(A)}\)

∴ P(B ∩ A) = P(A)P\(\left(\frac{B}{A}\right)\)

Again, ∵P(B) ≠ 0

P\(\left(\frac{\mathrm{A}}{\mathrm{B}}\right)=\frac{\mathrm{P}(\mathrm{A} \cap \mathrm{B})}{\mathrm{P}(\mathrm{B})}\)

∴ P(A ∩ B) = P(B) . P\(\left(\frac{\mathrm{A}}{\mathrm{B}}\right)\)

∴ P(A ∩ B) = P(A) . P\(\left(\frac{B}{A}\right)\) = P(B).P\(\left(\frac{\mathrm{A}}{\mathrm{B}}\right)\)
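Both forms of the multiplication theorem can be checked on an experiment where the events are dependent. The sketch below assumes two balls drawn in order, without replacement, from a bag of 3 red and 2 black balls; the bag and the ball labels are hypothetical.

```python
from fractions import Fraction
from itertools import permutations

# Assumed experiment: draw 2 balls in order, without replacement, from 3 red and 2 black.
balls = ["R1", "R2", "R3", "B1", "B2"]
S = set(permutations(balls, 2))              # 20 equally likely ordered draws

def P(E):
    return Fraction(len(E), len(S))

A = {s for s in S if s[0].startswith("R")}   # first ball is red
B = {s for s in S if s[1].startswith("R")}   # second ball is red

p_B_given_A = Fraction(len(A & B), len(A))   # P(B/A) by counting
p_A_given_B = Fraction(len(A & B), len(B))   # P(A/B) by counting
print(P(A & B), P(A) * p_B_given_A, P(B) * p_A_given_B)  # 3/10 3/10 3/10
```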


Bayes’ Theorem or Inverse Probability Theorem

Statement:

If A1, A2, … and An are ‘n’ mutually exclusive and exhaustive events of a random experiment associated with sample space S such that P(Ai) > 0 and E is any event which takes place in conjunction with any one of Ai then

P(Ak/E) = \(\frac{P\left(A_{k}\right) P\left(E / A_{k}\right)}{\sum_{i=1}^{n} P\left(A_{i}\right) P\left(E / A_{i}\right)}\), for any k = 1, 2, ………. n;

Proof:

Since A1, A2, … and An are mutually exclusive and exhaustive in sample space S, we have Ai ∩ Aj = Φ for i ≠ j, 1 ≤ i, j ≤ n and A1 ∪ A2 ∪…. ∪ An = S.

Since E is any event which takes place in conjunction with any one of Ai, we have

E = (A1 ∩ E) ∪ (A2 ∩ E) ∪ …………….. ∪(An ∩ E).

Since A1, A2, ……… An are mutually exclusive, their subsets (A1 ∩ E), (A2 ∩ E), …, (An ∩ E) are also mutually exclusive.

Now P(E) = P(E ∩ A1) + P(E ∩ A2) + ………. + P(E ∩ An) (By axiom of additivity)

= P(A1)P(E/A1) + P(A2)P(E/A2) + ………… + P(An)P(E/An)

(By multiplication theorem of probability)

= \(\sum_{i=1}^{n}\)P(Ai)P(E/Ai) …………..(1)

By definition of conditional probability

P(Ak/E) = \(\frac{P\left(A_{k} \cap E\right)}{P(E)}\)

= \(\frac{P\left(A_{k}\right) P\left(E / A_{k}\right)}{P(E)}\)

(By multiplication theorem)

= \(\frac{P\left(A_{k}\right) P\left(E / A_{k}\right)}{\sum_{i=1}^{n} P\left(A_{i}\right) P\left(E / A_{i}\right)}\) from (1)

Hence the theorem